Because I host a few websites, a private streaming service, and I like working with server gear

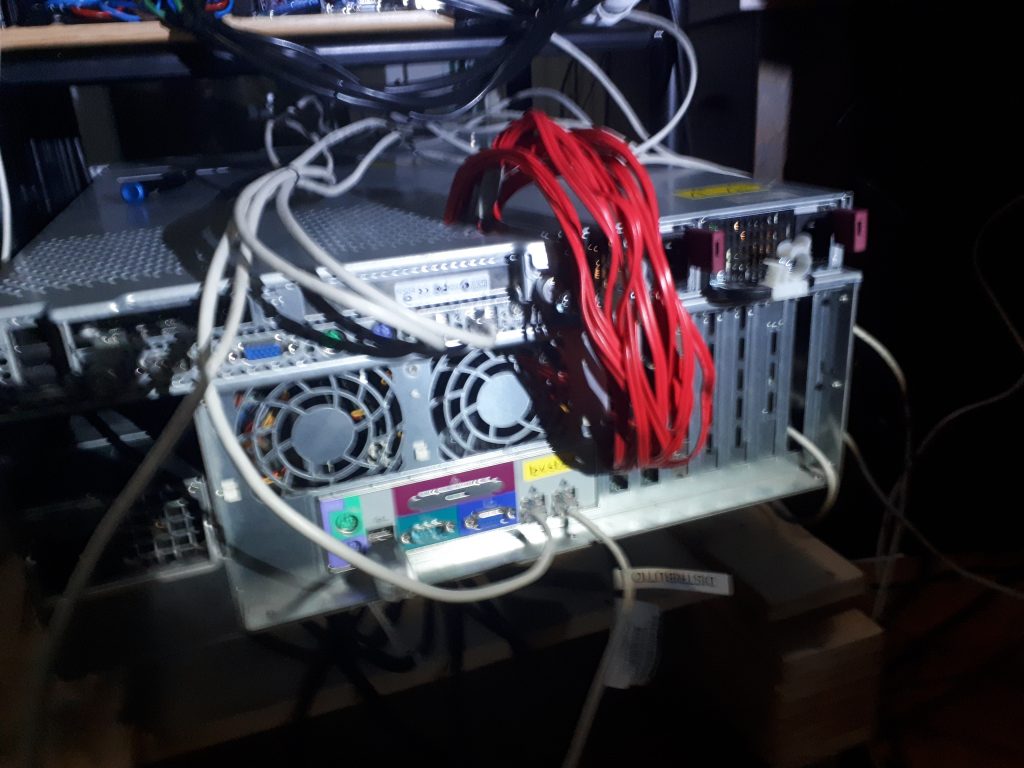

I built myself a small server rack.

The hardware

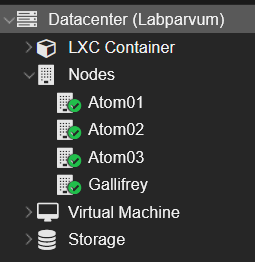

I currently have 4 nodes working in a cluster.

1 powerful node and 3 low-power nodes.

The nodes have the following specs:

Main server – Gallifrey

CPU: Ryzen 5 3600

Memory: 128GB ECC

Storage: 2x 1TB NVMe (RAID 1) + 4x 6TB HDD (RAIDZ1) + 480GB SSD (Ceph cluster)

Secondary nodes – Atom1, 2 & 3

nu691 single-board computer

CPU: Intel Celeron N3350 (dual core)

Memory: 8GB

Storage: 128GB M.2 (boot drive) + 480GB SSD (Ceph cluster)

Network: 2x 1GbE with round-robin (RR) link aggregation (max observed speed: 1.4 Gbit/s)

Ethernet switch: GS724T gigabit 24-port smart managed switch

UPS: IBM 2145 UPS-1U

Cluster nodes

humble beginnings

The virtualization software

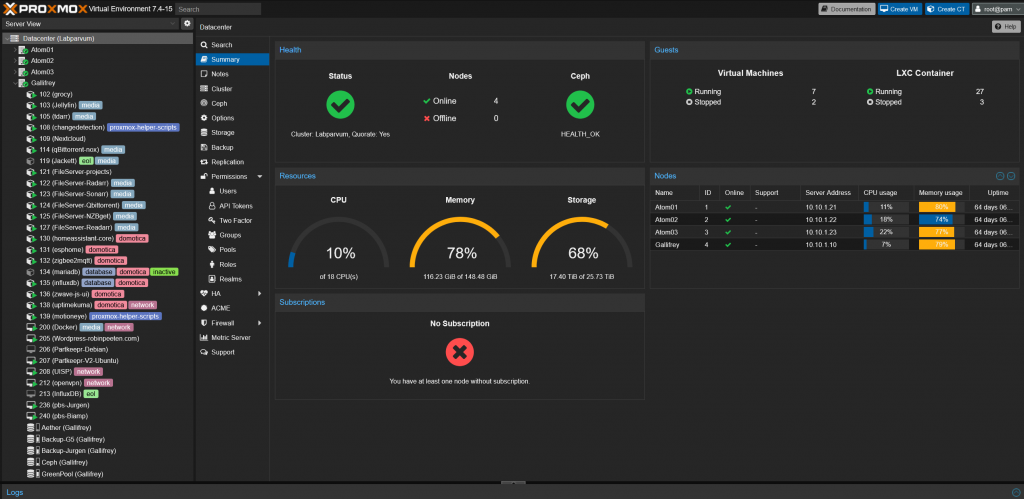

My server cluster uses Proxmox as its operating system.

I use Proxmox instead of other virtualization operating systems like ESXi for several reasons.

Mainly because it is open source and all the “enterprise features” are free.

It is also pretty easy to use, and with PVEDiscordDark the GUI looks amazing, in my opinion.

Storage

I use a mix of ZFS and Ceph

ZFS is used for boot drives and bulk storage

Ceph is used for high availability and flexibility

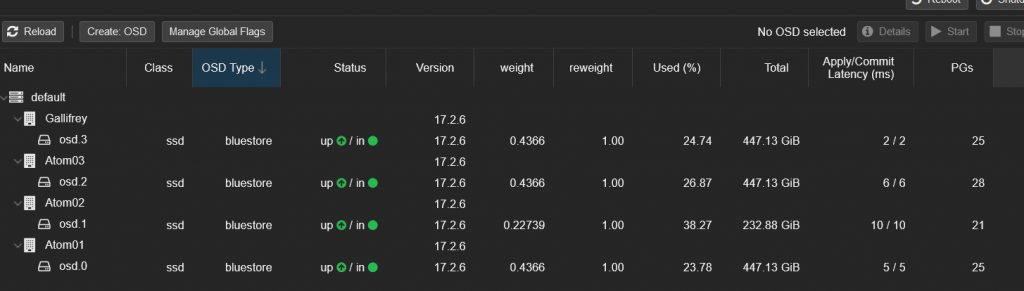

Ceph

Ceph is a free and open-source software-defined storage platform that provides object, block, and file storage built on a common distributed cluster foundation.

Ceph provides completely distributed operation without a single point of failure, and it scales well.

Each of my nodes has 1 SSD assigned to the Ceph cluster. This keeps the storage pool available when 1 or 2 nodes go offline, so the containers and VMs keep running, giving me a good high-availability cluster.

Ceph also gives the ability to dynamically resize the storage pool by adding or removing disks at any time.
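To get a feel for what adding or removing a disk does to the pool, here is a rough capacity sketch. The replica count of 3 is an assumption (it is Ceph's default for replicated pools, but my actual pool settings are not listed here), and the numbers ignore Ceph's own overhead and the free headroom a cluster needs for rebalancing.

```python
def replicated_usable_gb(osd_sizes_gb, replicas):
    """Approximate usable space of a replicated pool: raw capacity / replica count."""
    if replicas < 1:
        raise ValueError("replicas must be at least 1")
    return sum(osd_sizes_gb) / replicas


REPLICAS = 3  # assumption: Ceph's default replicated pool size, not stated in this post

osds = [480, 480, 480, 480]            # one 480GB SSD per node
usable_now = replicated_usable_gb(osds, REPLICAS)

osds_after_adding = osds + [480]       # hypothetical: one extra 480GB SSD added later
usable_after = replicated_usable_gb(osds_after_adding, REPLICAS)

print(f"4 SSDs: ~{usable_now:.0f} GB usable")
print(f"5 SSDs: ~{usable_after:.0f} GB usable")
```

Every stored block exists several times, so each extra disk only adds its size divided by the replica count to the usable space.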

ZFS

I use ZFS over EXT4 for the boot drives mainly for the error detection feature. Using consumer drives has bitten me in the past, and I would like to avoid data corruption as much as possible now.

For bulk storage, I use ZFS RAIDZ1 for my current pool of 4x 6TB drives.

I’m planning on creating a new pool with 5x 12TB drives with RAIDZ2.
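The capacity trade-off between the two pools can be estimated with simple arithmetic. This is only a rough sketch: it assumes a single RAIDZ vdev per pool and ignores ZFS metadata, padding, and the difference between TB and TiB, so real usable space will be somewhat lower.

```python
def raidz_usable_tb(drives: int, drive_tb: float, parity: int) -> float:
    """Approximate usable capacity of one RAIDZ vdev: data drives times drive size."""
    if drives <= parity:
        raise ValueError("a RAIDZ vdev needs more drives than parity disks")
    return (drives - parity) * drive_tb


current = raidz_usable_tb(drives=4, drive_tb=6, parity=1)    # RAIDZ1, 4x 6TB
planned = raidz_usable_tb(drives=5, drive_tb=12, parity=2)   # RAIDZ2, 5x 12TB

print(f"current pool: ~{current:g} TB usable, survives 1 drive failure")
print(f"planned pool: ~{planned:g} TB usable, survives 2 drive failures")
```

So the planned pool roughly doubles the usable space while also tolerating a second drive failure.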

I use HBA cards with ZFS instead of hardware RAID so I can read the SMART data from all drives and have error correction for maximum data protection. In the past I had major problems with hardware RAID, where 1 of 4 SSDs was overheating and returning bad data. Because I was using RAID 5 on a RAID controller, I could not see the problem and the card could not pick out the bad data. This led to the system getting corrupted 3 times over the course of a couple of weeks. I suspected a software issue, since it kept getting corrupted when doing a particular action; the system kept running but would refuse to start any new VMs. After I reinstalled the OS a 3rd time and it corrupted again, I pulled all the SSDs and found that 1 was bad and the RAID controller had not caught it.

Backup solution

For my backup system I follow the 3-2-1 rule:

3 copies of the data

2 different media

1 offsite copy

I hope to add an offline/air-gapped datastore in the future.
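The 3-2-1 rule is easy to express as a small check. The sketch below is illustrative only: the `Copy` class, the copy names, and the medium labels are made up for the example and are not an inventory of my actual hardware.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Copy:
    name: str      # where this copy of the data lives
    medium: str    # illustrative label for the storage medium
    offsite: bool  # True if the copy is stored at another location


def satisfies_321(copies):
    """True if there are >= 3 copies, on >= 2 different media, with >= 1 offsite."""
    return (
        len(copies) >= 3
        and len({c.medium for c in copies}) >= 2
        and any(c.offsite for c in copies)
    )


# Hypothetical inventory; the medium labels are assumptions for illustration.
copies = [
    Copy("live data on the cluster", "ssd", offsite=False),
    Copy("onsite backup server", "hdd", offsite=False),
    Copy("offsite backup server", "hdd", offsite=True),
]

print(satisfies_321(copies))      # all three conditions hold
print(satisfies_321(copies[:2]))  # only 2 copies and nothing offsite
```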

Onsite backup

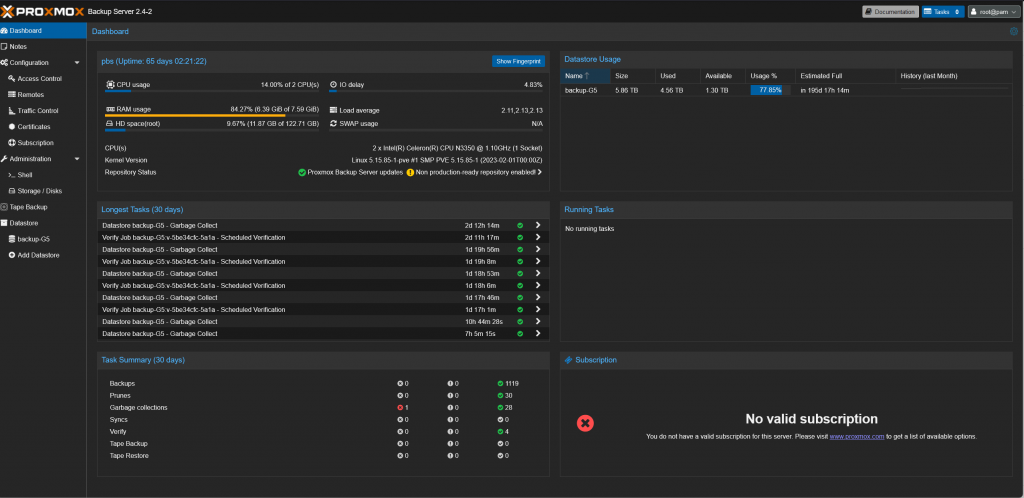

Every day an encrypted backup is made on a separate onsite server.

This node uses the same single-board computer as the Atom nodes in my cluster.

nu691 single-board computer

CPU: Intel Celeron N3350 (dual core)

Memory: 8GB

Storage: 128GB M.2 (boot drive) + 6TB HDD (datastore)

Network: 2x 1GbE with round-robin (RR) link aggregation

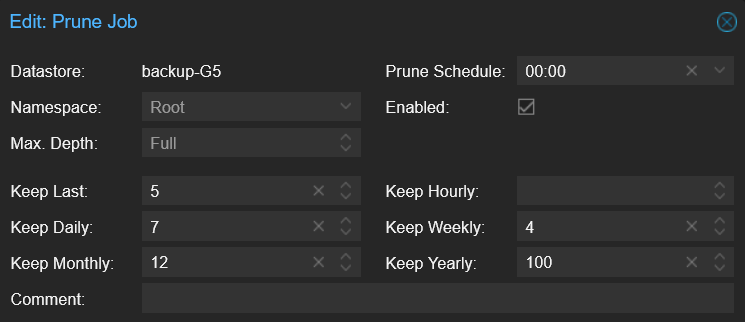

Proxmox Backup Server makes incremental, compressed backups, which saves a lot of storage space. Using the prune settings shown below, I can go back to old backups in case I need to look at old data, without taking up too much space.
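To illustrate how this kind of retention works, here is a simplified sketch of keep-daily/keep-weekly style pruning. It is not Proxmox Backup Server's actual implementation, and the values `keep_daily=3` and `keep_weekly=2` are hypothetical, not my real prune settings.

```python
from datetime import date, timedelta


def select_kept(snapshots, keep_daily, keep_weekly):
    """Return the snapshot dates to keep, newest first (simplified retention sketch)."""
    ordered = sorted(set(snapshots), reverse=True)  # newest first
    kept = []
    rules = [
        (keep_daily, lambda d: d),                             # one slot per calendar day
        (keep_weekly, lambda d: tuple(d.isocalendar())[:2]),   # one slot per ISO (year, week)
    ]
    for limit, period_of in rules:
        # Periods already represented by snapshots that an earlier rule kept.
        covered = {period_of(d) for d in kept}
        added = 0
        for snap in ordered:
            if snap in kept:
                continue
            period = period_of(snap)
            if period in covered:
                continue  # an already kept, newer snapshot represents this period
            if added >= limit:
                break     # this rule has filled all of its slots
            covered.add(period)
            kept.append(snap)
            added += 1
    return sorted(kept, reverse=True)


# Hypothetical example: one backup per day for 30 days.
snapshots = [date(2024, 1, 1) + timedelta(days=i) for i in range(30)]
kept = select_kept(snapshots, keep_daily=3, keep_weekly=2)
print([d.isoformat() for d in kept])
```

Everything not in `kept` would be pruned: recent history stays dense, while older history thins out to one backup per week.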

A verification job runs weekly to check data integrity and catch bit rot early.

Over the span of a year with daily onsite and weekly offsite backups of ~10 VMs and ~35 LXC containers, I have had 1 backup image experience data corruption.

Sadly, this image had to be discarded, but because I have daily backups this posed no issue.

Overall this system has been very reliable and stable and I trust my data to be safe.

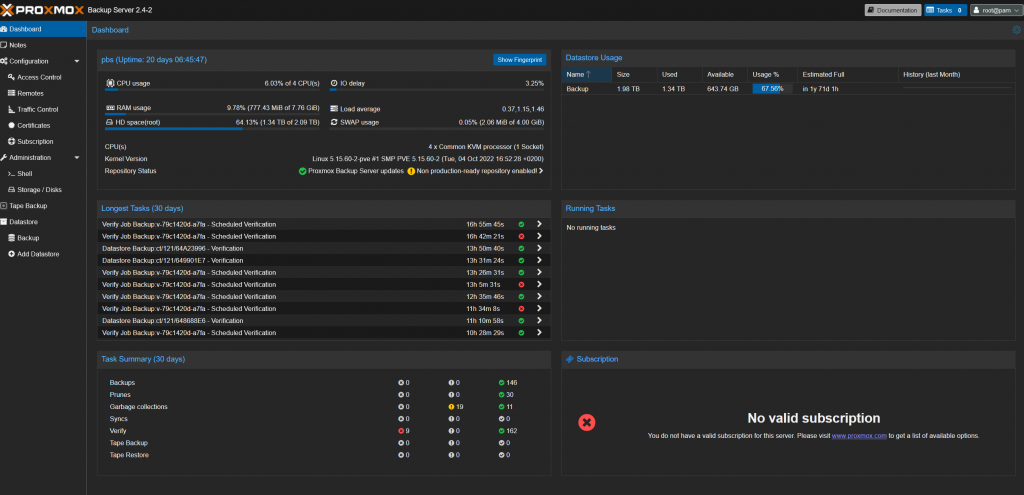

Offsite backup

One of my colleagues at work also runs his own homelab and this creates the perfect opportunity for both of us to have a cheap but reliable offsite backup system.

Both of us created a VM on our main server with Proxmox Backup Server running on it.

The VMs get a 2TB disk to boot from and use as a backup datastore.

Access is given via port forwarding and protected with a strong unique password.

Every backup is encrypted with an encryption key unique for this storage location.

Because of the encryption and the simple but safe connection method, neither site should be affected if the other gets compromised.

Backups are run weekly instead of daily because of the lower bandwidth over the internet.

Verification, pruning and garbage collection are also run weekly.

Because of the more limited storage, less data is kept and stricter pruning is performed, and less important data is only stored on the onsite backup server.

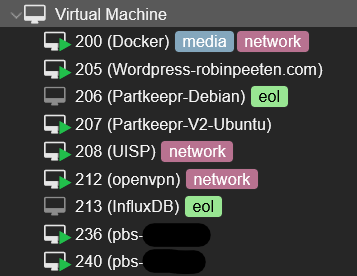

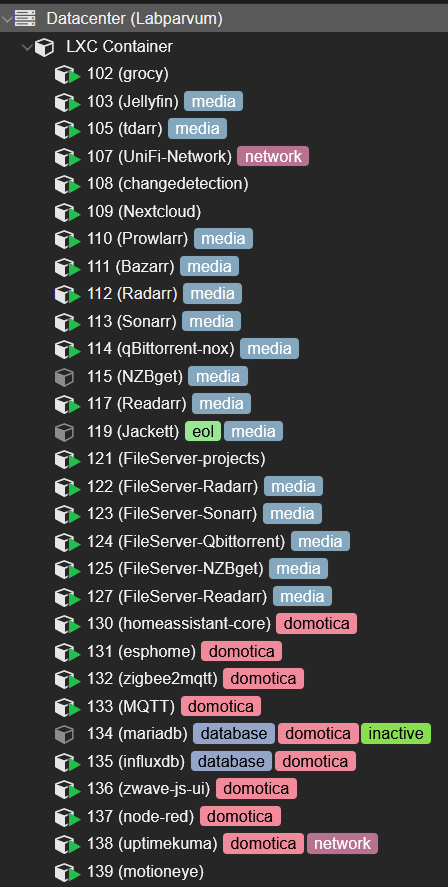

VM vs Docker vs LXC container

I use a combination of virtual machines, Docker containers, and LXC containers.

However, I stopped making new Docker containers and will probably completely switch over to LXC in the future for the reasons listed below.

VM

I only use VMs in case I need extra security and isolation, or if the application is more complicated to get running in a container.

Docker

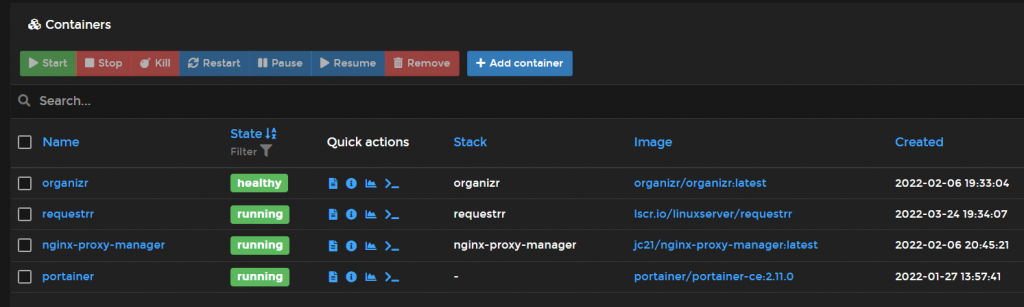

I started out with Docker because this is a proven industry solution for containers.

But I almost completely switched over to only using LXC containers.

Docker is great for isolating 1 application very efficiently, but LXC has better flexibility in my opinion.

One issue with Docker that also drove me to switch is that LXC is natively supported by Proxmox while Docker needs to be virtualized.

Running it inside an LXC container on ZFS storage, as in my setup, did not work for me.

And running it in a VM seems counterintuitive to me and uses more resources than I would like.

Docker still has some advantages over LXC like even lower resource usage per application and better scalability, but these are less applicable to my hobby homelab environment.

I only have a few Docker containers running now.

LXC

Linux Containers (LXC) is an operating-system-level virtualization method for running multiple isolated Linux systems on a control host using a single Linux kernel.

This way I get a fully isolated operating system, like with a VM, while still using the same kernel as the host, meaning the resource usage is very low.

LXC containers are also natively integrated into Proxmox, so using and deploying them is extremely easy.

This also makes migrating individual containers to other nodes easier.

The container itself has a boot time of only a few seconds; however, the application boot time is, in my experience, slower compared to Docker. The total boot time greatly depends on the application, though. Most of my applications are online within 30 seconds, some even within 5-10 seconds.

Power consumption

Because power costs money and a server rack tends to require a lot of power, I do my best to reduce this as much as possible.

I started this hobby using old/cheap enterprise hardware, but that tends to use quite a lot of power: my old main server would consume ~200W at idle on its own.

My current power consumption for my whole rack is ~200W.

I achieved this by using more power-efficient consumer hardware like the Ryzen 3600 CPU in my main server and using low-power single-board computers for my secondary nodes and backup server.

Reducing the number of hard drives also saved me a few dozen watts.

Having a large NAS with many drives is very cool of course, but by using larger capacity drives I reduced the number of drives I need from 12 to 4, reducing the power consumption by around 50-70 watts. Saving €160-200 a year, I should break even on this investment in ~3 years while also getting a small storage space improvement.
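The arithmetic behind these savings can be sketched as follows. It assumes the drives run 24/7, and pairing the low figures (50W, €160) and the high figures (70W, €200) with each other is also an assumption; the electricity price is derived from those numbers, not taken from an actual bill.

```python
HOURS_PER_YEAR = 24 * 365  # 8760 hours, assuming the drives run around the clock


def annual_kwh(watts):
    """Energy used per year by a constant load of the given wattage."""
    return watts * HOURS_PER_YEAR / 1000


def implied_price_eur_per_kwh(eur_per_year, watts):
    """Electricity price implied by a yearly saving in euros and a saved wattage."""
    return eur_per_year / annual_kwh(watts)


for watts, eur in ((50, 160), (70, 200)):
    print(
        f"{watts} W saved = {annual_kwh(watts):.0f} kWh/year, "
        f"implied price ~EUR {implied_price_eur_per_kwh(eur, watts):.2f}/kWh"
    )
```

A break-even after ~3 years at these savings corresponds to roughly 3 x €160-200, so about €480-600 spent on the new drives.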

To further reduce the cost of this hobby I also made a small solar panel installation that powers this server rack and my workstation/bedroom.

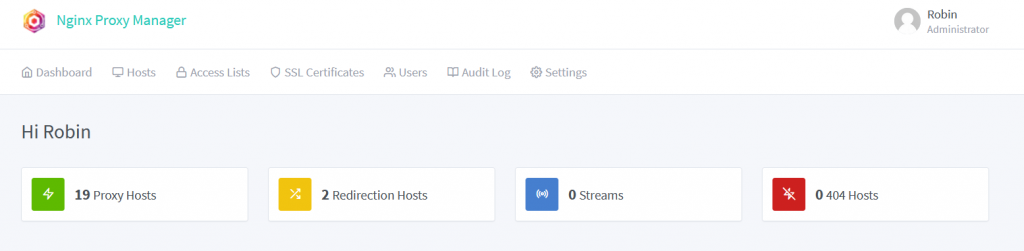

Reverse proxy manager

To secure all connections between clients and servers (WordPress, Jellyfin, …), I use a reverse proxy so all traffic is encrypted with a valid SSL certificate.

There is a great Nginx reverse proxy manager for this, which I run in a Docker container.

Network

For better speed and reliability, my ISP's modem has all extra functions such as Wi-Fi and routing disabled and acts only as a modem, with MAC passthrough to my Ubiquiti EdgeRouter Lite.

The EdgeRouter Lite gives me plenty of performance for a good price, handling many simultaneous connections without a noticeable increase in latency or decrease in speed. It has no problem fully utilizing my 40/400 connection with a stable ping at all times, while also handling quite a bit of traffic between LAN1 and LAN2.